The letter below from Marty Keller and colleagues was sent to many media outlets, to Retraction Watch, and to professional organizations on Wednesday. Paul Basken from the Chronicle of Higher Education asked me for a response, which I sent about an hour after receiving the letter. This response is from me rather than the 329 group.

One quick piece of housekeeping. Restoring Study 329 is not about giving paroxetine to adolescents – it’s about all drugs for all indications across medicine and for all ages. It deals with standard Industry MO to hype benefits and hide harms. One of the best bits of coverage of this aspect of the story yesterday was in Cosmopolitan.

Letter From Keller et al

Dear

Nine of us whose names are attached to this email (we did not have time to create electronic signatures) were authors on the study originally published in 2001 in the Journal of the American Academy of Child and Adolescent Psychiatry entitled, “Efficacy of paroxetine in the treatment of adolescent major depression: a randomized controlled trial,” and have read the reanalysis of our article, which is entitled, “Restoring Study 329: efficacy and harms of paroxetine and imipramine in treatment of major depression in adolescence”, currently embargoed for publication in the British Medical Journal (BMJ) early this week. We are providing you with a brief summary response to several of the points in that article with which we have strong disagreement. Given the length and detail of the BMJ publication and the multitude of specific concerns we have with its approach and conclusions, we will be writing and submitting to the BMJ’s editor an in-depth letter rebutting the claims and accusations made in the article. It will take a significant amount of work to make this scholarly and thorough, and we do not have a timetable; but that level of analysis by us far exceeds the time frame needed to give you that more comprehensive response by today.

The study was planned and designed between 1991 and 1992. Subject enrollment began in 1994, and was completed in 1997, at which time analysis of the data commenced. The study authors comprised virtually all of the academic researchers studying the treatment of child depression in North America at the time. The study was designed by academic psychiatrists and adopted with very little change by GSK, who funded the study in an academic / industry partnership. The two statisticians who helped design the study are among the most esteemed in psychiatry. The goal of the study designers was to do the best study possible to advance the treatment of depression in youth, not primarily as a drug registration trial. Some design decisions would be made differently today; best-practice methodology has changed over the ensuing 24-year interval since inception of our study.

In the interval from when we sat down to plan the study to when we approached the data analysis phase, but prior to the blind being broken, the academic authors, not the sponsor, added several additional measures of depression as secondary outcomes. We did so because the field of pediatric-age depression had reached a consensus that the Hamilton Depression Rating Scale (our primary outcome measure) had significant limitations in assessing mood disturbance in younger patients. Accordingly, taking this into consideration, and in advance of breaking the blind, we added secondary outcome measures agreed upon by all authors of the paper. We found statistically significant indications of efficacy in these measures. This was clearly reported in our article, as were the negative findings.

In the “BMJ-Restoring Study 329 …” reanalysis, the following statement is used to justify non-examination of a range of secondary outcome measures:

Both before and after breaking the blind, however, the sponsors made changes to the secondary outcomes as previously detailed. We could not find any document that provided any scientific rationale for these post hoc changes and the outcomes are therefore not reported in this paper.

This is not correct. The secondary outcomes were decided by the authors prior to the blind being broken. We believe now, as we did then, that the inclusion of these measures in the study and in our analysis was entirely appropriate and was clearly and fully reported in our paper. While secondary outcome measures may be irrelevant for purposes of governmental approval of a pharmaceutical indication, they were and to this day are frequently and appropriately included in study reports even in those cases when the primary measures do not reach statistical significance. The authors of “Restoring Study 329” state “there were no discrepancies between any of our analyses and those contained in the CSR [clinical study report]”. In other words, the disagreement on treatment outcomes rests entirely on the arbitrary dismissal of our secondary outcome measures.

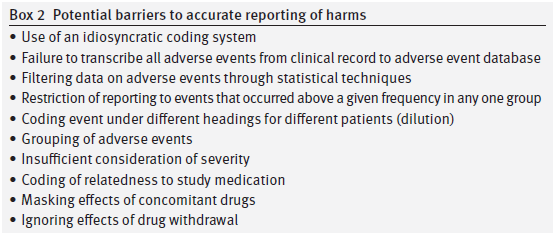

We also have areas of significant disagreement on the “Restoring Study 329” analysis of side effects (which the authors label “harms”). Their reanalysis uses the FDA MedDRA approach to side effect data, which was not available when our study was done. We agree that this instrument is a meaningful advance over the approach we used at the time, which was based on the FDA’s then-current COSTART approach. That one can do better reanalyzing adverse event data using refinements in approach that have accrued in the 15 years since a study’s publication is unsurprising and not a valid critique of our study as performed and presented.

A second area of disagreement (concerning the side effect data) is with their statement, “We have not undertaken statistical tests for harms.” The authors of “Restoring Study 329” with this decision are saying that we need very high and rigorous statistical standards for declaring a treatment to be beneficial but for declaring a treatment to be harmful then statistics can’t help us and whatever an individual reader thinks based on raw tabulation that looks like a harm is a harm. Statistics of course does offer several approaches to the question of when is there a meaningful difference in the side effect rates between different groups. There are pros and cons to the use of P values, but alternatives like confidence intervals are available.

“Restoring Study 329” asserts that this paper was ghostwritten, citing an early publication by one of the coauthors of that article. There was absolutely nothing about the process involved in the drafting, revision, or completion of our paper that constitutes “ghostwriting”. This study was initiated by academic investigators, undertaken as an academic / industry partnership, and the resulting report was authored mainly by the academic investigators with industry collaboration.

Finally the “Restoring Study 329” authors discuss an initiative to correct publications called “restoring invisible and abandoned trials (RIAT)” (BMJ, 2013; 346-f4223). “Restoring Study 329” states “We reanalyzed the data from Study 329 according to the RIAT recommendations” but gives no reference for a specific methodology for RIAT reanalysis. The RIAT approach may have general “recommendations” but we find no evidence that there is a consensus on precisely how such a RIAT analysis makes the myriad decisions inherent in any reanalysis nor do we think there is any consensus in the field that would allow the authors of this reanalysis or any other potential reanalysis to definitively say they got it right.

In summary, to describe our trial as “misreported” is pejorative and wrong, both from consideration of best research practices at the time, and in terms of a retrospective from the standpoint of current best practices.

Martin B. Keller, M.D.

Boris Birmaher, M.D.

Gregory N. Clarke, Ph.D.

Graham J. Emslie, M.D.

Harold Koplewicz, M.D.

Stan Kutcher, M.D.

Neal Ryan, M.D.

William H. Sack, M.D.

Michael Strober, Ph.D.

Response

In the case of a study designed to advance the treatment of depression in adolescents, it seems strange to have picked imipramine 200-300 mg per day as a comparator, unusual to have left the continuation phase unpublished, odd to have neglected to analyse the taper phase, dangerous to have downplayed the data on suicide risks and the profile of psychiatric adverse events more generally, and unfortunate to have failed to update the record in response to attempts to offer a more representative version of the study to those who write guidelines or otherwise shape treatment.

As regards the efficacy elements, the correspondence we had with GSK, which will be available on Study329.org as of Sept 16 and on the BMJ website, indicates clearly that we made many efforts to establish the basis for introducing secondary endpoints not present in the protocol. GSK have been unwilling or unable to provide evidence on this issue, even though the protocol states that no changes will be permitted that are not discussed with SmithKline. We would be more than willing to post any material that Dr Keller and colleagues can provide.

Whatever about such material, it is of note that when submitting Study 329 to FDA in 2002, GSK described the study as a negative study and FDA concurred that it was negative. This is of interest in the light of Dr Keller’s hint that it was GSK’s interest in submitting this study to regulators that led to a corruption of the process.

Several issues arise as regards harms. First, we would love to see the ADECs coding dictionary if any of the original investigators have one. Does anyone know whether ADECs requires suicidal events to be coded as emotional lability or was there another option?

Second, can the investigators explain why headaches were moved from classification under Body as a Whole in the Clinical Study Report to sit alongside emotional lability under a Nervous System heading in the 2001 paper?

It may be something of a purist view, but significance testing was originally linked to primary endpoints. Harms are never the primary endpoint of a trial and no RCT is designed to detect harms adequately. It is appropriate to hold a company or doctors who may be aiming to make money out of vulnerable people to a high standard when it comes to efficacy. But for those interested in advancing the treatment of patients with any medical condition, it is not appropriate to deny the likely existence of harms on the basis of a failure to reach a significance threshold that the very process of conducting an RCT means cannot be met, as investigators’ attention is systematically diverted elsewhere.
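To make the arithmetic behind this point concrete, here is a minimal sketch of a confidence interval for a difference in adverse event rates between two arms. The counts are hypothetical and are not taken from Study 329, the function name `risk_difference_ci` is invented for illustration, and the simple Wald interval used here is only a rough approximation when events are few.

```python
import math


def risk_difference_ci(events_a, n_a, events_b, n_b, z=1.96):
    """Wald confidence interval for the difference in event rates (a minus b).

    z=1.96 gives an approximate 95% interval. This is a textbook
    approximation, not the method of any particular study.
    """
    p_a = events_a / n_a
    p_b = events_b / n_b
    diff = p_a - p_b
    se = math.sqrt(p_a * (1 - p_a) / n_a + p_b * (1 - p_b) / n_b)
    return diff, diff - z * se, diff + z * se


if __name__ == "__main__":
    # Hypothetical counts, NOT Study 329 data: 10 of 100 patients on drug
    # and 5 of 100 on placebo experience a given adverse event.
    diff, low, high = risk_difference_ci(10, 100, 5, 100)
    print(f"risk difference = {diff:.3f}, 95% CI = ({low:.3f}, {high:.3f})")
```

With these made-up numbers the event rate on drug is double that on placebo, yet the interval runs from roughly -0.02 to 0.12 and so includes zero. In a trial of this size a doubling of a harm would not reach conventional significance, which is why the absence of significance cannot be read as the absence of harm.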

As regards RIAT methods, a key method is to stick to the protocol. A second safeguard is to audit every step taken and to this end we have attached a 61-page audit record (Appendix 1) to this paper. An even more important method is to make the data fully available, which it will be on Study329.org.

As regards ghostwriting, I personally am happy to stick to the designation of this study as ghostwritten. For those unversed in these issues, journal editors, medical writing companies and academic authors cling to a figleaf that if the medical writer’s name is mentioned somewhere, s/he is not a ghost. But for many, the presence on the authorship line of names that have never had access to the data and who cannot stand over the claims made other than by assertion is what’s ghostly.

Having made all these points, there is a point of agreement to note. Dr Keller and colleagues state that:

“nor do we think there is any consensus in the field that would allow the authors of this reanalysis or any other potential reanalysis to definitively say they got it right”.

We agree. For us, this is the main point behind the article. This is why we need access to the data. It is only with collaborative efforts based on full access to the data that we can manage to get to a best possible interpretation, but even this will be provisional rather than definitive. Is there anything that would hold the authors of the second interpretation of these data (Keller and colleagues) back from joining with us, the authors of the third interpretation, in asking that the data of all trials for all treatments, across all indications, be made fully available? Such a call would be consistent with the empirical method that was as applicable in 1991 as it is now.

David Healy

Holding Response on Behalf of RIAT 329